Overcoming the Challenges of Developing 3D LiDAR Applications

There are many difficulties involved in employing LiDAR sensors, processing LiDAR data, and developing new LiDAR applications. Learn how to overcome them.

Numerous obstacles arise while integrating LiDAR into an application, but the majority of them may be overcome with the right solutions.

We will talk about the specifics of the solutions available as well as the benefits and drawbacks of each one.

The challenges of using LiDAR sensors

Here are the key issues that come with using LiDAR:

The sheer number of LiDAR sensors on the market can be a source of frustration for developers.

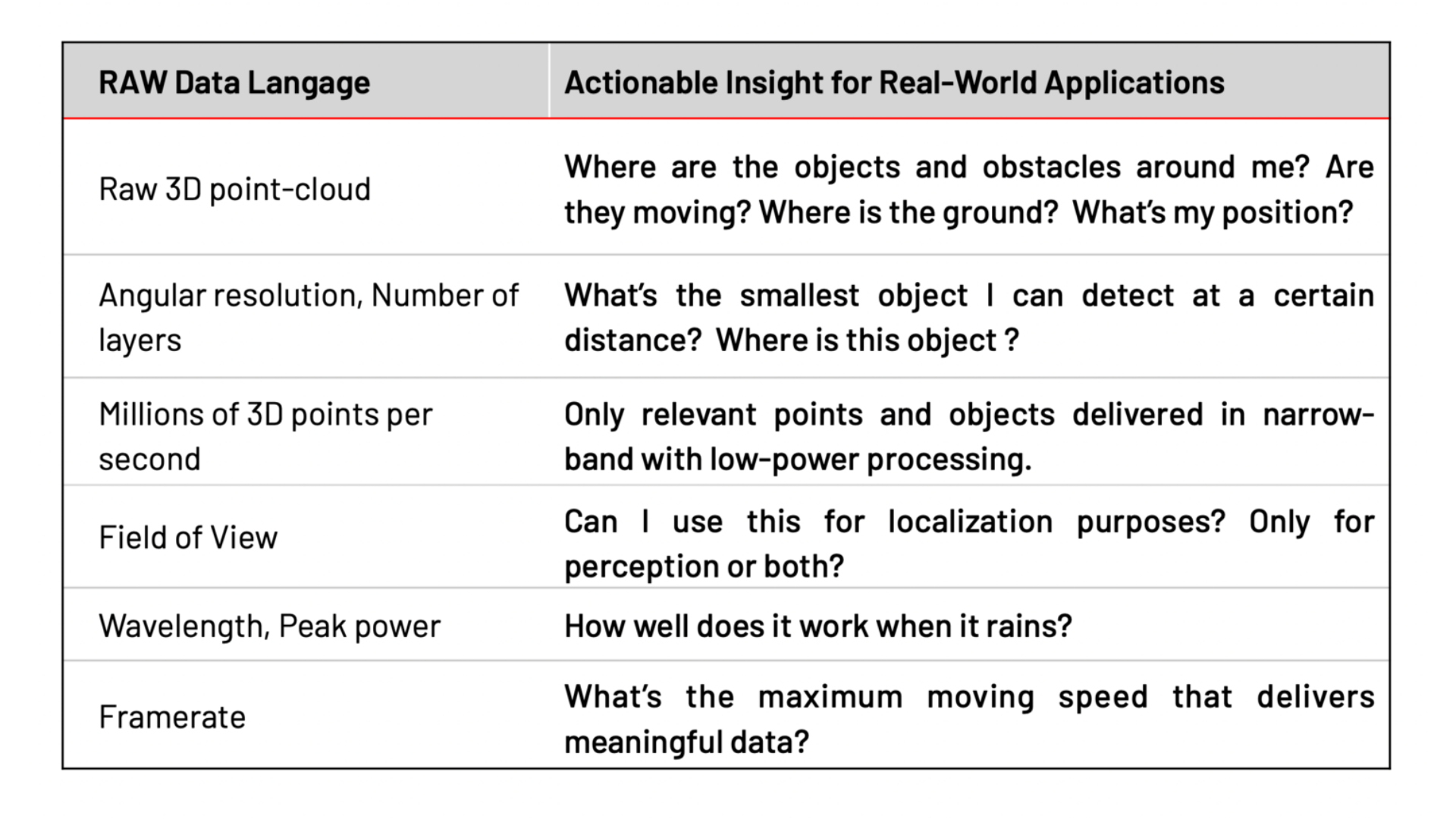

In addition, the information provided by manufacturers, including points per second, field of view, frame rate, and more, does not easily translate into actionable metrics for the end application.

As a result, solution developers can easily choose the wrong sensor for their project.

For instance, you could wrongly conclude that you need a costly sensor with unnecessarily high resolution, when a cheaper, lower-resolution sensor from the same manufacturer would perform just as well.
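One way to turn a datasheet figure into an application-level metric is to estimate how many points land on a target of interest at a given range. The sketch below does this from the horizontal and vertical angular resolution. It assumes a uniform sampling grid and a flat target facing the sensor, and it ignores beam divergence, reflectivity and missed returns. The function name, the pedestrian size and both sensor resolutions are hypothetical values chosen for illustration.

```python
import math


def points_on_target(width_m: float, height_m: float, range_m: float,
                     h_res_deg: float, v_res_deg: float) -> int:
    """Rough count of points landing on a flat target facing the sensor.

    Assumes a uniform angular sampling grid and ignores beam divergence,
    occlusion, target reflectivity and missed returns.
    """
    # Angle subtended by the target at this range, in degrees
    h_extent_deg = math.degrees(2 * math.atan(width_m / (2 * range_m)))
    v_extent_deg = math.degrees(2 * math.atan(height_m / (2 * range_m)))
    # Whole beams that fit inside that angle in each direction
    cols = math.floor(h_extent_deg / h_res_deg)
    rows = math.floor(v_extent_deg / v_res_deg)
    return cols * rows


# Hypothetical pedestrian (0.5 m x 1.7 m) at 30 m, two hypothetical sensors
print(points_on_target(0.5, 1.7, 30.0, 0.2, 0.4))  # 32
print(points_on_target(0.5, 1.7, 30.0, 0.1, 0.2))  # 144
```

If the application only needs a few dozen points to reliably detect a pedestrian at that range (an assumption that has to be validated for each use case), the coarser and cheaper sensor may already be sufficient.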

Because the LiDAR industry is still young, it lacks established standards.

Each LiDAR sensor uses a unique data format and network interface, and each of them requires a specific driver to decode the data, as well as different SDKs or frameworks.

In practice, this limits the number of manufacturers that teams evaluate, which can mean missing better or cheaper alternatives.
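A common way to contain this fragmentation is an adapter layer: one decoder per sensor, all producing the same point representation, so the rest of the application never sees vendor-specific formats. The sketch below illustrates the pattern with two invented binary layouts ("vendor_a" and "vendor_b"); they do not correspond to any real manufacturer's protocol, and all names are hypothetical.

```python
import math
import struct
from typing import Callable, Dict, List, Tuple

Point = Tuple[float, float, float]  # x, y, z in metres, common frame

# Hypothetical vendor A: little-endian float32 x, y, z (m) + uint8 intensity
_VENDOR_A = struct.Struct("<fffB")
# Hypothetical vendor B: uint32 range (mm), uint16 azimuth and int16
# elevation, both in hundredths of a degree
_VENDOR_B = struct.Struct("<IHh")


def decode_vendor_a(payload: bytes) -> List[Point]:
    """Cartesian records: keep x, y, z and drop intensity."""
    step = _VENDOR_A.size
    return [_VENDOR_A.unpack_from(payload, off)[:3]
            for off in range(0, len(payload) - step + 1, step)]


def decode_vendor_b(payload: bytes) -> List[Point]:
    """Spherical records: convert to Cartesian coordinates."""
    points = []
    step = _VENDOR_B.size
    for off in range(0, len(payload) - step + 1, step):
        range_mm, az_cdeg, el_cdeg = _VENDOR_B.unpack_from(payload, off)
        r = range_mm / 1000.0
        az = math.radians(az_cdeg / 100.0)
        el = math.radians(el_cdeg / 100.0)
        points.append((r * math.cos(el) * math.cos(az),
                       r * math.cos(el) * math.sin(az),
                       r * math.sin(el)))
    return points


# One decoder per supported sensor; the application only sees List[Point]
DECODERS: Dict[str, Callable[[bytes], List[Point]]] = {
    "vendor_a": decode_vendor_a,
    "vendor_b": decode_vendor_b,
}


def to_common_points(vendor: str, payload: bytes) -> List[Point]:
    return DECODERS[vendor](payload)


a = struct.pack("<fffB", 1.0, 2.0, 3.0, 200)
b = struct.pack("<IHh", 10_000, 9_000, 0)  # 10 m, azimuth 90 deg, elevation 0
print(to_common_points("vendor_a", a))  # [(1.0, 2.0, 3.0)]
print(to_common_points("vendor_b", b))  # approximately (0.0, 10.0, 0.0)
```

Adding a new sensor then means writing one more decoder rather than touching the application code, which also makes it cheaper to evaluate additional manufacturers.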

Point cloud data is complex to interpret in real-time without expert help.

The raw point cloud data is sparse and abstract, requiring significant resources and expertise to turn it into information that can power useful applications.

The resulting applications can thus miss LiDAR’s true potential, or developers can come to the wrong conclusion that LiDAR is not yet at the necessary level of performance.

Each manufacturer’s sensor uses different data formats, voltage levels, connectors, and network transport protocols.

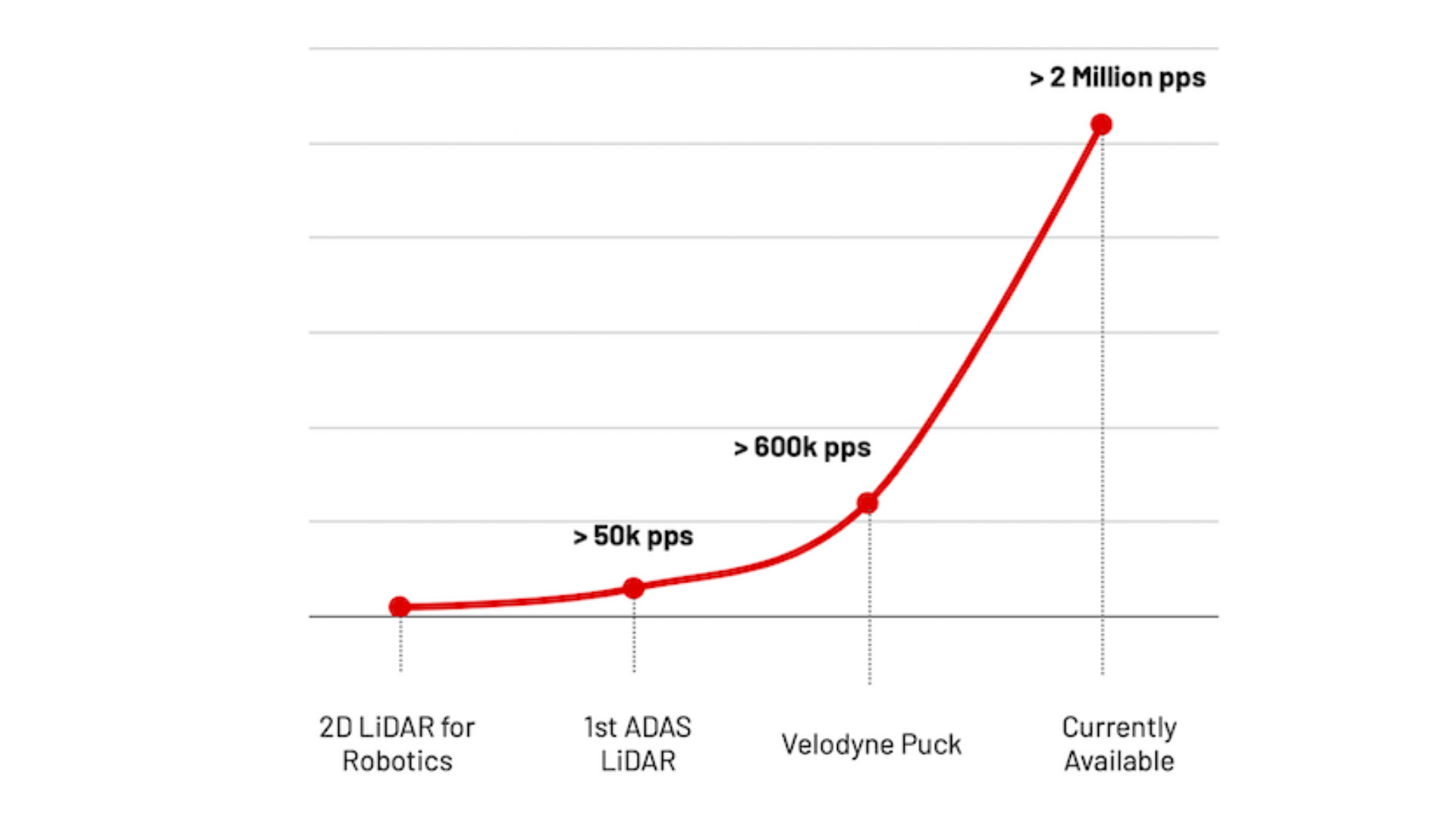

The ever-increasing resolution of newer LiDARs adds to the challenge, creating a massive amount of 3D data that needs to be transmitted and processed.

As a direct consequence, application developers often need expensive, high-end computing platforms, specific wiring, and high-bandwidth connections.

When this is not acceptable or possible, they may give up on LiDAR altogether, which deprives them of the extensive benefits this technology brings.
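The data volumes involved can be estimated with simple arithmetic: points per second times bytes per point gives the raw payload rate. The sketch below uses a hypothetical sensor producing 2.6 million points per second at an assumed 16 bytes per point; real packets add headers and vendor-specific fields, so actual rates are higher.

```python
def raw_bitrate_mbps(points_per_second: int, bytes_per_point: int) -> float:
    """Payload bit rate in Mbit/s, ignoring packet headers and protocol overhead."""
    return points_per_second * bytes_per_point * 8 / 1e6


def gigabytes_per_hour(points_per_second: int, bytes_per_point: int) -> float:
    """Raw storage needed to record one hour of points, in GB (10^9 bytes)."""
    return points_per_second * bytes_per_point * 3600 / 1e9


# Hypothetical sensor: 2.6 million points/s, 16 bytes per point
# (e.g. float32 x, y, z plus a 4-byte intensity/timestamp field)
pps, bpp = 2_600_000, 16
print(raw_bitrate_mbps(pps, bpp))      # about 333 Mbit/s for one sensor
print(4 * raw_bitrate_mbps(pps, bpp))  # four sensors: over 1 Gbit/s
print(gigabytes_per_hour(pps, bpp))    # about 150 GB per hour
```

Under these assumptions, four such sensors already exceed the capacity of a single Gigabit Ethernet link before any processing happens.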

Most LiDAR systems aren’t designed to receive over-the-air updates and improvements. Moreover, most are not compatible with models from other generations, even from the same manufacturer.

While this was acceptable when LiDAR technology was mostly at the prototype stage, it can cause compatibility problems in large-scale professional deployments.

When an application can’t be performed by a single sensor, integrators and developers must merge data that originates from several of them.
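Merging data from several sensors starts with expressing every point in one shared coordinate frame. Assuming each sensor's extrinsic calibration (a rotation R and translation t) is already known, this is the rigid transform p_world = R * p_sensor + t applied per sensor. The sketch restricts rotations to yaw for brevity; the function names and the two-sensor layout are hypothetical.

```python
import math
from typing import Iterable, List, Sequence, Tuple

Point = Tuple[float, float, float]
Rotation = Sequence[Sequence[float]]  # 3x3 row-major rotation matrix


def yaw_rotation(yaw_deg: float) -> List[List[float]]:
    """Rotation about the vertical z axis (enough for level-mounted sensors)."""
    c = math.cos(math.radians(yaw_deg))
    s = math.sin(math.radians(yaw_deg))
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def to_world(points: Iterable[Point], rotation: Rotation,
             translation: Point) -> List[Point]:
    """Apply p_world = R * p_sensor + t to each point."""
    out = []
    for p in points:
        out.append(tuple(
            sum(rotation[row][k] * p[k] for k in range(3)) + translation[row]
            for row in range(3)))
    return out


def merge_clouds(clouds: Iterable[Tuple[List[Point], Rotation, Point]]) -> List[Point]:
    """Merge per-sensor clouds, each given with its extrinsic calibration (R, t)."""
    merged: List[Point] = []
    for points, rotation, translation in clouds:
        merged.extend(to_world(points, rotation, translation))
    return merged


# Sensor A sits at the origin; sensor B sits 10 m away along x, facing back
# towards A (yaw 180 deg). Both observe the same object.
cloud_a = [(6.0, 0.0, 0.0)]
cloud_b = [(4.0, 0.0, 0.0)]
merged = merge_clouds([
    (cloud_a, yaw_rotation(0.0), (0.0, 0.0, 0.0)),
    (cloud_b, yaw_rotation(180.0), (10.0, 0.0, 0.0)),
])
print(merged)  # both points land at (6, 0, 0), up to floating-point error
```

Any error in R or t shows up directly as the same object appearing twice in slightly different places, which is why calibration is a recurring cost of multi-sensor setups.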

LiDAR data occasionally also needs to be fused with images from cameras, radar, and other sensors. That requires dealing with calibration, synchronisation, and networking complexities, all of which delay development of the actual application.

Integration becomes even harder when the most effective setup combines sensors from several manufacturers.

This is frequently the best option because each LiDAR provider uses a different underlying technology, each with its own advantages and disadvantages (e.g. wavelength, scanning vs. flash, fixed vs. dynamic scan pattern).

This is especially true when you want to monitor a wide area, such as the entrance of an airport terminal (where 360-degree, ceiling-mounted sensors are better suited), while also monitoring specific aisles on the same premises (where narrow-FoV LiDARs are better suited).
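Synchronisation can be illustrated with one common approach: pairing each LiDAR frame with the camera frame whose timestamp is closest, and rejecting pairs that are too far apart. The sketch assumes both devices already share a common time base (for example through hardware time synchronisation such as PTP); the timestamps, tolerance and function names are hypothetical.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence, Tuple


def nearest_index(timestamps: Sequence[float], t: float) -> Optional[int]:
    """Index of the timestamp closest to t; timestamps must be sorted."""
    if not timestamps:
        return None
    i = bisect_left(timestamps, t)
    # The closest timestamp is either just before or just after position i
    candidates = [j for j in (i - 1, i) if 0 <= j < len(timestamps)]
    return min(candidates, key=lambda j: abs(timestamps[j] - t))


def pair_frames(lidar_ts: Sequence[float], camera_ts: Sequence[float],
                max_offset_s: float) -> List[Tuple[int, int]]:
    """Pair each LiDAR frame with the nearest camera frame within max_offset_s."""
    pairs = []
    for i, t in enumerate(lidar_ts):
        j = nearest_index(camera_ts, t)
        if j is not None and abs(camera_ts[j] - t) <= max_offset_s:
            pairs.append((i, j))
    return pairs


# Hypothetical timestamps in seconds: a 10 Hz LiDAR and a ~30 Hz camera
lidar_ts = [0.0, 0.1, 0.2, 0.3]
camera_ts = [0.005, 0.038, 0.072, 0.105, 0.138, 0.172, 0.205, 0.238, 0.272]
print(pair_frames(lidar_ts, camera_ts, max_offset_s=0.01))
# [(0, 0), (1, 3), (2, 6)]: the last LiDAR frame has no close camera frame
```

Nearest-timestamp matching is only a starting point; on moving platforms, points captured during a single LiDAR sweep can also need motion compensation.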

The challenges of manipulating LiDAR data

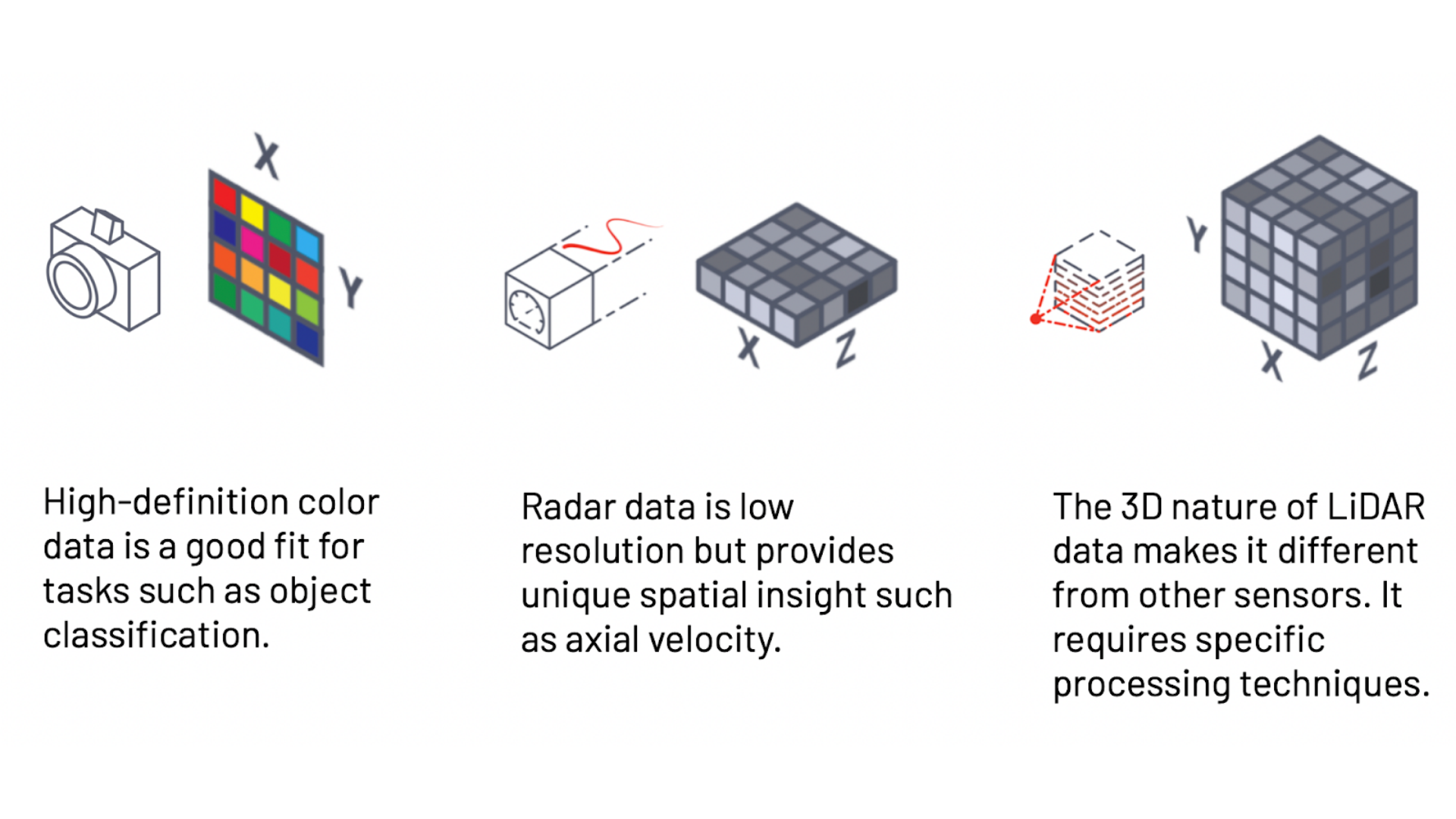

Most of the latest advancements in Computer Vision, including Machine Learning, are related to 2D image data, which cannot be directly applied to 3D LiDAR.

The Points per Second (pps) metric defines how many points the sensor measures each second. It is a convenient way to track the progress of LiDAR technology because it does not depend on other parameters such as FoV and frame rate: for a given FoV and frame rate, more points per second translates directly into higher resolution.
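The relationship between a pps budget, frame rate and resolution can be sketched in a few lines. The model below assumes points are spread uniformly over the FoV, which real scan patterns rarely are, and the sensor figures are hypothetical.

```python
import math


def points_per_frame(points_per_second: float, frame_rate_hz: float) -> float:
    return points_per_second / frame_rate_hz


def mean_angular_spacing_deg(points_per_second: float, frame_rate_hz: float,
                             h_fov_deg: float, v_fov_deg: float) -> float:
    """Spacing of an equivalent uniform grid covering the FoV once per frame."""
    n = points_per_frame(points_per_second, frame_rate_hz)
    return math.sqrt(h_fov_deg * v_fov_deg / n)


# Hypothetical 1.2 million pts/s sensor with a 120 x 30 degree FoV
for hz in (10, 20):
    print(hz, points_per_frame(1_200_000, hz),
          round(mean_angular_spacing_deg(1_200_000, hz, 120, 30), 3))
# 10 120000.0 0.173
# 20 60000.0 0.245
```

With the pps budget fixed, doubling the frame rate halves the points in each frame and coarsens the spacing by a factor of about 1.41, so the same sensor can be configured for responsiveness or for detail.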

The amount of 3D data to be processed is growing very quickly.

3D data is complex to interpret and use because it is sparse and fundamentally different from the 2D RGB images that Computer Vision specialists are used to working with.

That becomes even harder when you take into account moving platforms, such as mobile robots, and the massive amounts of data that newer sensors provide.
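Sparsity can be made concrete by dividing space into voxels and counting how many actually contain a point. Because LiDAR samples surfaces, most of the volume stays empty, whereas every pixel of an image holds a value. The sketch below uses a synthetic, idealised scan of flat ground; the function name and scene are hypothetical, and real scans are usually sparser still because point spacing grows with distance from the sensor.

```python
import math
from typing import Iterable, Set, Tuple

Point = Tuple[float, float, float]


def voxel_occupancy(points: Iterable[Point], voxel_size: float,
                    bounds_min: Point, bounds_max: Point) -> float:
    """Fraction of voxels in an axis-aligned box that contain at least one point."""
    occupied: Set[Tuple[int, ...]] = set()
    for p in points:
        # Ignore points outside the box
        if all(lo <= c < hi for c, lo, hi in zip(p, bounds_min, bounds_max)):
            occupied.add(tuple(math.floor((c - lo) / voxel_size)
                               for c, lo in zip(p, bounds_min)))
    nx, ny, nz = (math.ceil((hi - lo) / voxel_size)
                  for lo, hi in zip(bounds_min, bounds_max))
    return len(occupied) / (nx * ny * nz)


# Synthetic scan of flat ground: one point per 25 cm cell over 20 m x 20 m,
# inside a box 4 m tall
ground = [(i * 0.25 + 0.125, j * 0.25 + 0.125, 0.125)
          for i in range(80) for j in range(80)]
print(voxel_occupancy(ground, 0.25, (0.0, 0.0, 0.0), (20.0, 20.0, 4.0)))  # 0.0625
```

Even with every ground cell sampled, only one voxel in sixteen is occupied, which is why 3D pipelines tend to rely on sparse data structures rather than dense arrays.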

Modelling 3D data as RGB-D, with “D” standing for depth, has wrongly been considered a quick win: traditional image processing pipelines simply treat depth as an additional color channel.

Although this corner-cutting method lets you recycle 2D-based techniques and get quick results, it is only suited to the simplest use cases. Processing 3D data properly requires techniques designed for the nature of that data.
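One way to see what the 2D shortcut throws away is to project a point cloud onto a spherical range image, where each pixel stores a single distance, much like the “D” channel of RGB-D. Whenever several points fall into the same pixel (for example when the grid is coarser than the data, or when clouds from several frames or sensors are combined), only one survives. The sketch below is an illustration under those assumptions; the image size, vertical FoV and function name are hypothetical.

```python
import math
from typing import Dict, Iterable, Tuple

Point = Tuple[float, float, float]


def to_range_image(points: Iterable[Point], width: int, height: int,
                   v_fov_deg: Tuple[float, float] = (-15.0, 15.0)
                   ) -> Tuple[Dict[Tuple[int, int], float], int]:
    """Project points onto a spherical range image (sparse dict of pixels).

    Each pixel keeps only the closest range. Returns the image and the number
    of points discarded because another point fell into the same pixel.
    Points outside the vertical FoV are skipped.
    """
    v_min, v_max = (math.radians(a) for a in v_fov_deg)
    image: Dict[Tuple[int, int], float] = {}
    collisions = 0
    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue
        azimuth = math.atan2(y, x)  # in [-pi, pi]
        elevation = math.asin(z / r)
        if not v_min <= elevation <= v_max:
            continue
        col = min(int((azimuth + math.pi) / (2 * math.pi) * width), width - 1)
        row = min(int((v_max - elevation) / (v_max - v_min) * height), height - 1)
        if (row, col) in image:
            collisions += 1
            image[(row, col)] = min(image[(row, col)], r)
        else:
            image[(row, col)] = r
    return image, collisions


# A near and a far point along almost the same ray, plus one elsewhere
pts = [(5.0, 0.0, 0.0), (10.0, 0.01, 0.0), (0.0, 5.0, 1.0)]
image, collisions = to_range_image(pts, width=360, height=32)
print(len(image), collisions)  # 2 1 -> the 10 m return is lost
```

The projection is fast and lets 2D tools run unchanged, but the discarded points and the distorted neighbourhoods near object edges are exactly the information that 3D-specific methods keep.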

The challenges of creating new LiDAR applications

3D LiDAR for real-time operations was designed in the context of developing new technologies with long-term objectives such as autonomous robots and smart cities.

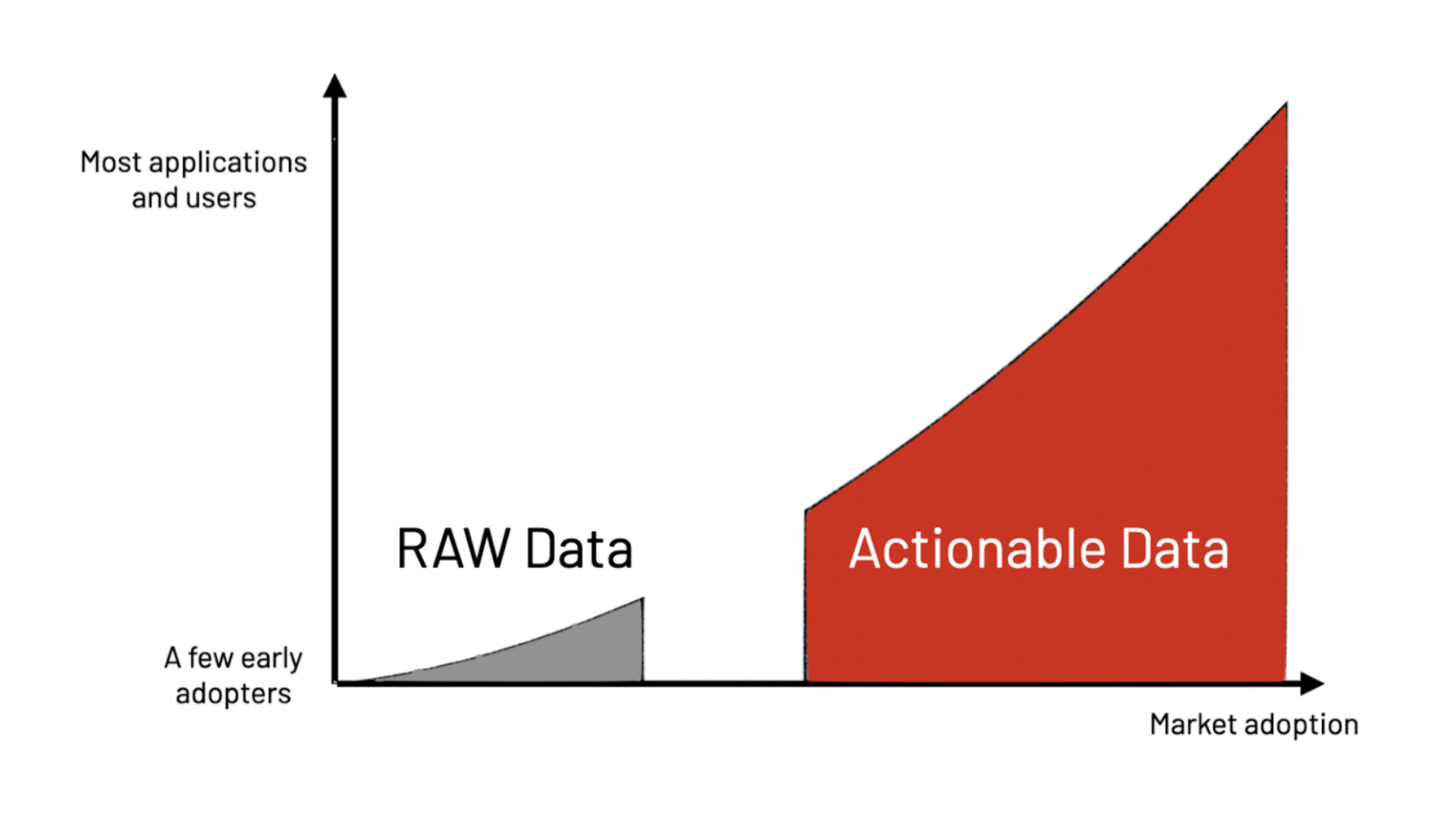

Making real-time LiDAR usable sooner in mature, business-driven applications requires turning 3D spatial data into actionable insight.

In order to differentiate themselves, LiDAR manufacturers mainly provide technical specifications like angular resolution, range at different reflectivity percentages, field of view, frame rates, and many more.

Early adopters of LiDAR, like the first autonomous driving engineers, appreciated this level of raw data information.

However, in most other applications, LiDAR technology is not an end in itself.

Top professionals in Smart Infrastructure, Robotics, and Industrial Applications in mature markets focus on the problems that LiDAR technology can help to solve.

Actionable data is what is required in practical, real-world use cases.

Overall, these issues make integrating LiDAR into new applications a frustrating experience for even the most seasoned teams.

This is particularly true in the majority of applications where LiDAR data processing needs to be done in real time, in contrast to some traditional use cases (such as mapping) that can tolerate the delay associated with post-processing approaches.

Furthermore, if the developer has created an internal solution, upgrading the LiDAR hardware in the future will be challenging because the internal solution is probably very closely tailored to this particular LiDAR.

Navigating the challenges of LiDAR integration

There are three main ways to tackle the complexity that comes with LiDAR: the Do-It-Yourself (DIY) approach, the engineering services approach, and the LiDAR preprocessing approach.

While some solutions may offer greater flexibility and autonomy, they may also be quite expensive and take a long time to implement in comparison to other alternatives.

Here are the specifics:

The first solution is the DIY approach: hiring a team of experts to build an in-house, full-stack software solution to integrate LiDAR.

The cost and development time of an application-specific, full-stack LiDAR solution depend on the features and performance required.

The Pros:

- This approach gives you, the application developer, almost complete control.

- It gives you the freedom to build custom solutions that fit your application, which would be expensive to commission from an engineering services company.

- It allows you to create intellectual property (IP) for your company, and the developed solutions can become a differentiating factor for your company in the market.

The Cons:

- It can be hard to find and retain talent, which translates to long delays in building the solution.

- It can be expensive to build and maintain an internal team since LiDAR experts are rare and increasingly sought after.

- It is difficult to guarantee the successful development of a LiDAR solution, as the technology is highly complex and constantly evolving, with a risk of never reaching completion.

- Going from a demonstrator to a product takes a great deal of time, since bugs, performance issues, and inefficiencies must be identified and eliminated, which can delay long-term plans and visions.

- An internally designed solution may not measure up to currently available solutions by the time it is released, rendering it non-competitive and a waste of time and capital.

- The choice to build a solution internally can distract the team from focusing on other value-added tasks that satisfy customer needs and create application-specific differentiation in the market.

- It can be difficult or impossible to leverage what other industries with similar needs have learned over the years or are currently developing.

The second solution is the engineering services approach. Partnering with an Engineering Services provider can be a good alternative to in-house development.

The Pros:

- This approach can be less expensive as long as the objective is close to what the service provider has already built.

- You gain access to a team of experts who can leverage their experience from other industries and past projects.

- It is a time-based commitment that transitions to results-based pricing.

- In some cases, you may be the owner of the solution’s IP.

- Time to market is shorter.

- Your in-house team can focus more on value-adding projects to complement the engineering services provider’s deliverables.

The Cons:

- Under time and budget pressure, the partner may tend to cut corners, which generally comes at a cost to long-term success and maintainability.

- The provider’s other, higher-priority projects may take precedence, and its best people may be reassigned away from your project.

- You may need to surrender valuable product or IP rights, which means that while you’ll be allowed to use the finished system, it’s not likely that you’ll hold IP ownership rights for your application.

- Very few companies can reliably provide the necessary level of expertise or assemble and maintain a competent team.

- Even when outsourced, this option requires significant time and resources to manage the provider, taking the attention of your engineering team away from their own projects or clients.

- If the solution is over-fitted to specific hardware, its long-term viability can be compromised. Because the sector is still in its infancy, the LiDAR market is extremely dynamic, and today’s leaders in LiDAR hardware may not keep their position.

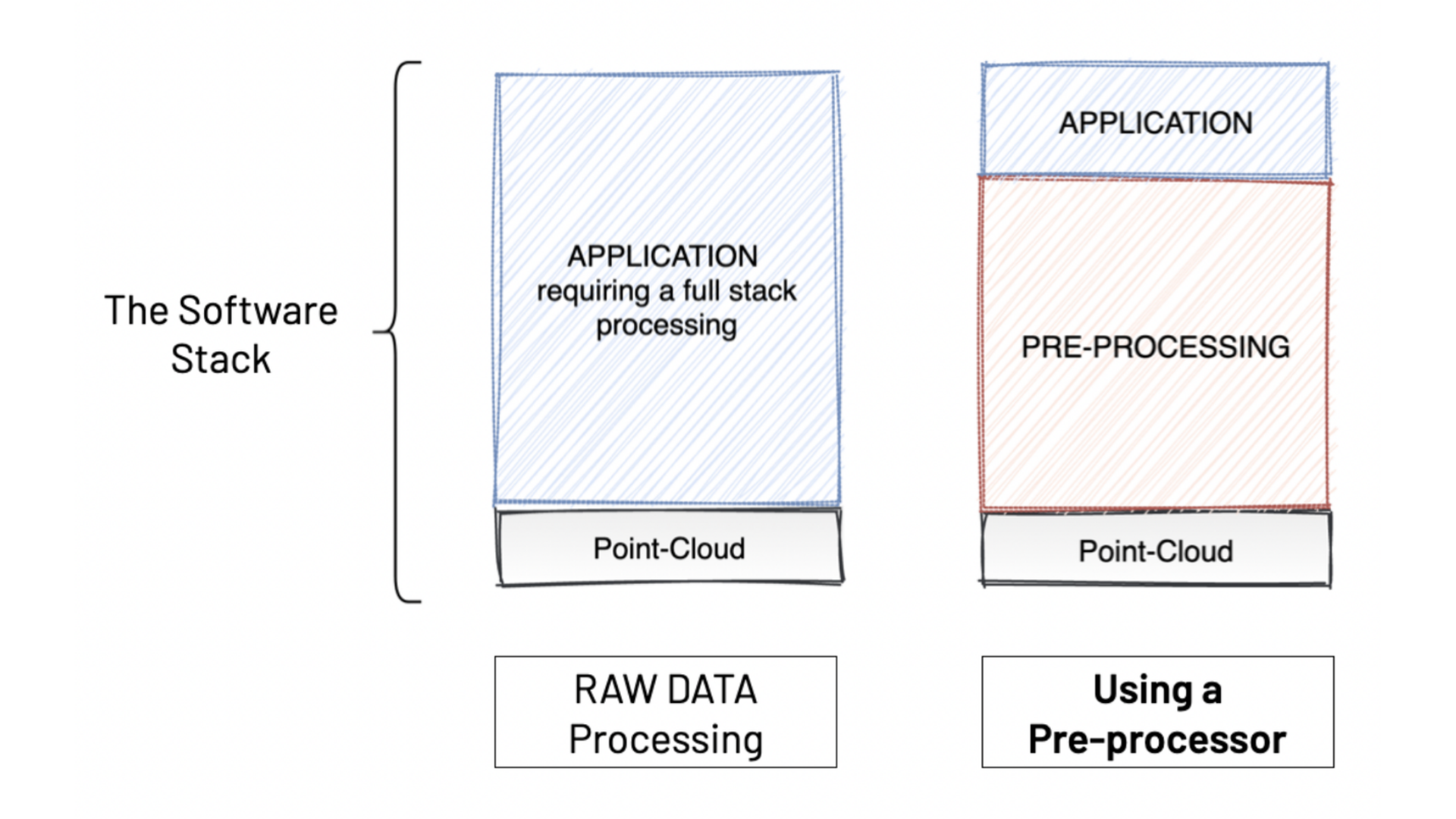

The third solution is LiDAR preprocessing software, which addresses these challenges by making it easy to use LiDAR in any application.

As mentioned previously, making use of the raw data provided by LiDAR sensors is challenging. Preprocessing software removes that problem without the heavy resource commitments and higher risks of the previous approaches.

The Augmented LiDAR Box© is an Edge Processing device that embeds Outsight’s real-time software and has set the global standard for LiDAR preprocessing software.

As its name indicates, a preprocessor doesn’t replace the application-specific software, but facilitates and accelerates its development, as can be seen in the image below:

If you want to understand the basics of what 3D LiDAR Preprocessor Software is and how it turns raw data into actionable information, check out our article “What's a 3D LiDAR Preprocessor?”

Conclusion

Due to the complexity, unpredictability, and time needed to develop LiDAR-based solutions, many businesses are unable to commercialize their 3D-enabled products. This can erode their competitive edge and keep new entrants from benefiting from the unique capabilities of this remarkable technology.

Fortunately, LiDAR preprocessing software now makes it practical to use LiDAR sensors, data, and technology.

We believe that combining LiDAR technology with scalable, user-friendly preprocessing will accelerate its adoption and significantly help the development of game-changing solutions and products that will result in a smarter and safer world.

To find out more about how we tackle the biggest challenges in LiDAR integration, click here to read our White Paper.